This guide will help you set up a basic AWS VPC with a virtual machine (EC2) and database (RDS) using Terraform (Infrastructure as Code).

I'll be breaking this topic down as follows: the outline, VPC, EC2, EC2 security group, RDS, RDS security group, and a conclusion.

The outline

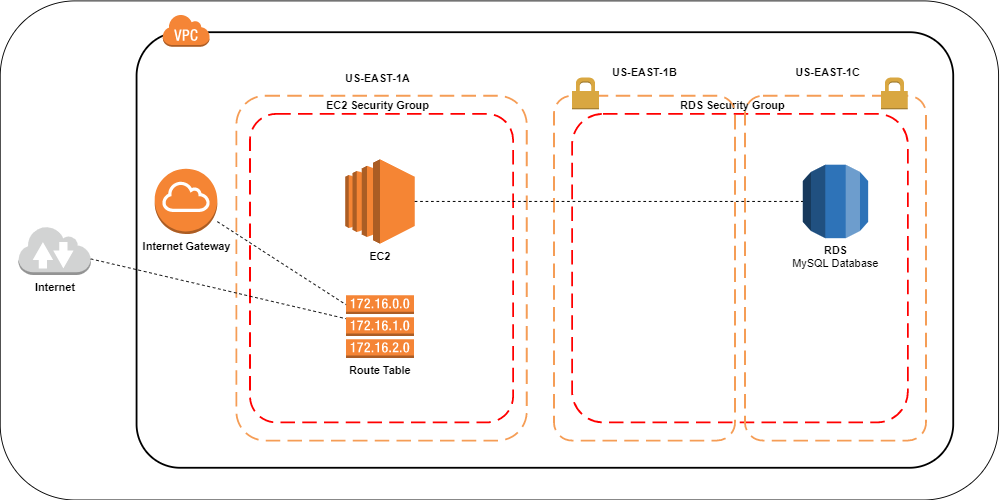

We're going to create the following on AWS:

A VPC with one route table that connects the Internet Gateway to the public subnet that hosts the EC2 instance.

Two private subnets configured as one subnet group that hosts one RDS instance.

Access control is arranged using security groups: one for the EC2 instance in the public subnet and one for the RDS instance in the private subnets.

The reason we have two subnets for RDS is that this is a deployment requirement: a DB subnet group must contain subnets in at least two Availability Zones, so you cannot launch an RDS instance in this setup without configuring two subnets.
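The subnet module itself is not shown in this article, so here is a minimal sketch of what the two RDS subnets could look like. The variable names, the resource name `rds`, and the choice of /24 ranges are assumptions for illustration, not the author's actual module.

```hcl
# Hypothetical inputs for a small subnet module; the names are assumptions.
variable "vpc_id" {
  type = string
}

variable "vpc_cidr" {
  type    = string
  default = "10.0.0.0/16"
}

# Look up the Availability Zones that are usable in the current region.
data "aws_availability_zones" "available" {
  state = "available"
}

# Two private subnets, each placed in a different Availability Zone.
resource "aws_subnet" "rds" {
  count = 2

  vpc_id            = var.vpc_id
  cidr_block        = cidrsubnet(var.vpc_cidr, 8, count.index + 1) # 10.0.1.0/24, 10.0.2.0/24
  availability_zone = data.aws_availability_zones.available.names[count.index]
}

# Exposed so that a caller can pass the IDs on to a DB subnet group.
output "ids" {
  value = aws_subnet.rds[*].id
}
```

Spreading the subnets over `names[0]` and `names[1]` is what satisfies the two-zone requirement; two subnets in the same zone would not.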

Ideally, you would want redundancy for both the EC2 and RDS instances. On the EC2 side you would add another subnet, in a different Availability Zone, for a second EC2 instance and put both instances behind a load balancer. If one of the zones goes down for whatever reason, your site is still up and running.

For this article, however, we're going to focus on the minimum setup for development and testing purposes.

VPC

To create a VPC we configure our module as follows:

resource "aws_vpc" "_" {

cidr_block = var.vpc_cidr

enable_dns_support = var.enable_dns_support

enable_dns_hostnames = var.enable_dns_hostnames

}

resource "aws_internet_gateway" "_" {

vpc_id = aws_vpc._.id

}

resource "aws_route_table" "_" {

vpc_id = aws_vpc._.id

dynamic "route" {

for_each = var.route

content {

cidr_block = route.value.cidr_block

gateway_id = route.value.gateway_id

instance_id = route.value.instance_id

nat_gateway_id = route.value.nat_gateway_id

}

}

}

resource "aws_route_table_association" "_" {

count = length(var.subnet_ids)

subnet_id = element(var.subnet_ids, count.index)

route_table_id = aws_route_table._.id

}

Please note: I removed the tag blocks for brevity, but you should tag every resource possible to enable easy cost tracking of your deployments and to be able to find everything should anything go wrong with the tfstate.

The route table gets its route to the internet gateway from the dynamic route block, which is filled from var.route. The aws_route_table_association resource then associates the route table with every subnet we supply in var.subnet_ids, so those subnets can reach the internet gateway.

I call the VPC module like this:

module "vpc" {

source = "../../modules/vpc"

resource_tag_name = var.resource_tag_name

namespace = var.namespace

region = var.region

vpc_cidr = "10.0.0.0/16"

route = [

{

cidr_block = "0.0.0.0/0"

gateway_id = module.vpc.gateway_id

instance_id = null

nat_gateway_id = null

}

]

subnet_ids = module.subnet_ec2.ids

}

The vpc_cidr = "10.0.0.0/16" means we're creating a VPC with 65,536 possible IP addresses: a /16 prefix fixes the first 16 bits of the address and leaves the remaining 16 bits free, which gives 2^16 addresses.
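To make the CIDR arithmetic concrete, here is a small sketch using Terraform's built-in pow and cidrsubnet functions. The local names and the decision to carve the VPC into /24 subnets are assumptions for illustration; the article does not say which subnet ranges are used.

```hcl
locals {
  vpc_cidr = "10.0.0.0/16"

  # A /16 prefix leaves 32 - 16 = 16 host bits, so 2^16 = 65536 addresses.
  vpc_address_count = pow(2, 32 - 16)

  # Adding 8 bits to the prefix yields /24 networks of 256 addresses each.
  ec2_subnet_cidr = cidrsubnet(local.vpc_cidr, 8, 0) # "10.0.0.0/24"

  rds_subnet_cidrs = [
    cidrsubnet(local.vpc_cidr, 8, 1), # "10.0.1.0/24"
    cidrsubnet(local.vpc_cidr, 8, 2), # "10.0.2.0/24"
  ]
}
```

You can try these expressions interactively with `terraform console` before committing to an address plan.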

The route table is connected to the EC2 subnet via subnet_ids = module.subnet_ec2.ids. This subnet has full access to the internet because the route for cidr_block "0.0.0.0/0" (all IPv4 destinations) points to the internet gateway.
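The module call above relies on inputs and outputs that are not listed in the article. A minimal sketch of that interface could look like the following; the output names id and gateway_id are taken from the references module.vpc.id and module.vpc.gateway_id, while the type constraints, descriptions, and defaults are assumptions.

```hcl
# Assumed shape of the route input: one object per route, unused targets set to null.
variable "route" {
  description = "Routes to add to the route table."
  type = list(object({
    cidr_block     = string
    gateway_id     = string
    instance_id    = string
    nat_gateway_id = string
  }))
  default = []
}

variable "subnet_ids" {
  description = "Subnets to associate with the route table."
  type        = list(string)
  default     = []
}

# Outputs referenced elsewhere as module.vpc.id and module.vpc.gateway_id.
output "id" {
  value = aws_vpc._.id
}

output "gateway_id" {
  value = aws_internet_gateway._.id
}
```

Using an object type means every route has to spell out all four attributes, which is why the call passes null for instance_id and nat_gateway_id.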

EC2

To create the EC2 instance, we just need to configure what machine we want and place it in the subnet that is associated with our route table.

In our EC2 module we configure the following:

locals {

resource_name_prefix = "${var.namespace}-${var.resource_tag_name}"

}

resource "aws_instance" "_" {

ami = var.ami

instance_type = var.instance_type

user_data = var.user_data

subnet_id = var.subnet_id

associate_public_ip_address = var.associate_public_ip_address

key_name = aws_key_pair._.key_name

vpc_security_group_ids = var.vpc_security_group_ids

iam_instance_profile = var.iam_instance_profile

}

resource "aws_eip" "_" {

vpc = true

instance = aws_instance._.id

}

resource "tls_private_key" "_" {

algorithm = "RSA"

rsa_bits = 4096

}

resource "aws_key_pair" "_" {

key_name = var.key_name

public_key = tls_private_key._.public_key_openssh

}

This creates:

- AWS EC2 instance

- With an elastic IP associated with that instance

- A public/private key (PEM key) to access the instance via SSH.
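To actually SSH into the instance you need the private half of the generated key. One way to get at it, sketched here under the assumption that you add outputs yourself (the output names are hypothetical), is a sensitive output in the EC2 module that the root module re-exports.

```hcl
# In the EC2 module: expose the generated private key in PEM format.
output "private_key_pem" {
  description = "Private key for SSH access to the instance."
  value       = tls_private_key._.private_key_pem
  sensitive   = true
}

# In the root module: re-export it so it can be read from the command line.
output "ec2_private_key_pem" {
  value     = module.ec2.private_key_pem
  sensitive = true
}
```

With this in place, `terraform output -raw ec2_private_key_pem > ec2-key.pem` followed by `chmod 600 ec2-key.pem` gives you a key file for ssh. Keep in mind that a key generated by tls_private_key is stored unencrypted in the Terraform state, so this approach fits development and testing better than production.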

Then we can call it:

module "ec2" {

source = "../../modules/ec2"

resource_tag_name = var.resource_tag_name

namespace = var.namespace

region = var.region

ami = "ami-07ebfd5b3428b6f4d" # Ubuntu Server 18.04 LTS

key_name = "${local.resource_name_prefix}-ec2-key"

instance_type = var.instance_type

subnet_id = module.subnet_ec2.ids[0]

vpc_security_group_ids = [aws_security_group.ec2.id]

vpc_id = module.vpc.id

}

There are four main things we need to supply to the EC2 module (among other things):

- The subnet to attach the EC2 instance to: subnet_id = module.subnet_ec2.ids[0]

- The security group to attach: vpc_security_group_ids = [aws_security_group.ec2.id]. A security group acts like a firewall.

- The VPC that it needs to be deployed in: vpc_id = module.vpc.id

- The AMI identifier. Here's more on how to find Amazon Machine Image (AMI) identifiers.
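Instead of hardcoding an AMI identifier, which differs per region, you could look it up with the aws_ami data source. This is a sketch rather than what the article uses: the data source name ubuntu is an assumption, and the name filter follows the naming pattern Canonical uses for its Ubuntu 18.04 images.

```hcl
# Find the most recent Ubuntu Server 18.04 LTS image published by Canonical.
data "aws_ami" "ubuntu" {
  most_recent = true
  owners      = ["099720109477"] # Canonical's AWS account ID

  filter {
    name   = "name"
    values = ["ubuntu/images/hvm-ssd/ubuntu-bionic-18.04-amd64-server-*"]
  }

  filter {
    name   = "virtualization-type"
    values = ["hvm"]
  }
}
```

In the module call you would then write ami = data.aws_ami.ubuntu.id. The trade-off is that a newly published image changes the lookup result, and Terraform will then plan to replace the instance, so pinning a fixed ID can be the better choice for long-lived machines.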

EC2 Security group

So far we have a VPC set up, an EC2 instance and its subnet, and we've configured a reference to the security group the EC2 instance is using.

A security group acts like a firewall for the instances it is attached to. It defines what traffic is allowed to come in (ingress) and what is allowed to go out (egress):

resource "aws_security_group" "ec2" {

name = "${local.resource_name_prefix}-ec2-sg"

description = "EC2 security group (terraform-managed)"

vpc_id = module.vpc.id

ingress {

from_port = var.rds_port

to_port = var.rds_port

protocol = "tcp"

description = "MySQL"

cidr_blocks = local.rds_cidr_blocks

}

ingress {

from_port = 22

to_port = 22

protocol = "tcp"

description = "SSH"

cidr_blocks = ["0.0.0.0/0"]

}

ingress {

from_port = 80

to_port = 80

protocol = "tcp"

description = "HTTP"

cidr_blocks = ["0.0.0.0/0"]

}

ingress {

from_port = 443

to_port = 443

protocol = "tcp"

description = "HTTPS"

cidr_blocks = ["0.0.0.0/0"]

}

# Allow all outbound traffic.

egress {

from_port = 0

to_port = 0

protocol = "-1"

cidr_blocks = ["0.0.0.0/0"]

}

}

We allow traffic to come in on ports 22 (SSH), 80 (HTTP), and 443 (HTTPS) from anywhere, plus the database port from the CIDR blocks in local.rds_cidr_blocks, and we allow ALL traffic on all ports to go out. If you want to further tighten this down, profile which ports your application uses for outbound traffic to increase security.

For ingress you can further tighten this down by supplying a specific IP address that is allowed to connect on port 22.
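As a sketch of that tightening, the port 22 rule can take its source range from a variable instead of 0.0.0.0/0. The variable name ssh_allowed_cidr is hypothetical, and the address in the comment comes from a range reserved for documentation.

```hcl
# Hypothetical variable: the single address (as a /32) allowed to use SSH.
variable "ssh_allowed_cidr" {
  description = "CIDR block allowed to connect on port 22, e.g. 203.0.113.10/32."
  type        = string
}

resource "aws_security_group" "ec2" {
  name        = "${local.resource_name_prefix}-ec2-sg"
  description = "EC2 security group (terraform-managed)"
  vpc_id      = module.vpc.id

  # SSH only from the allowed address instead of from anywhere.
  ingress {
    from_port   = 22
    to_port     = 22
    protocol    = "tcp"
    description = "SSH"
    cidr_blocks = [var.ssh_allowed_cidr]
  }

  # ... the remaining ingress and egress blocks stay as shown above ...
}
```

Leaving the variable without a default forces whoever runs the deployment to make a deliberate choice about who may connect.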

RDS

We're almost done with the setup; only our database subnet group and instance with its security group need to be configured.

locals {

resource_name_prefix = "${var.namespace}-${var.resource_tag_name}"

}

resource "aws_db_subnet_group" "_" {

name = "${local.resource_name_prefix}-${var.identifier}-subnet-group"

subnet_ids = var.subnet_ids

}

resource "aws_db_instance" "_" {

identifier = "${local.resource_name_prefix}-${var.identifier}"

allocated_storage = var.allocated_storage

backup_retention_period = var.backup_retention_period

backup_window = var.backup_window

maintenance_window = var.maintenance_window

db_subnet_group_name = aws_db_subnet_group._.id

engine = var.engine

engine_version = var.engine_version

instance_class = var.instance_class

multi_az = var.multi_az

name = var.name

username = var.username

password = var.password

port = var.port

publicly_accessible = var.publicly_accessible

storage_encrypted = var.storage_encrypted

storage_type = var.storage_type

vpc_security_group_ids = [aws_security_group._.id]

allow_major_version_upgrade = var.allow_major_version_upgrade

auto_minor_version_upgrade = var.auto_minor_version_upgrade

final_snapshot_identifier = var.final_snapshot_identifier

snapshot_identifier = var.snapshot_identifier

skip_final_snapshot = var.skip_final_snapshot

performance_insights_enabled = var.performance_insights_enabled

}

There are a lot of options here, so let's grab the tfvars file to see what most of these variables contain:

# RDS

rds_identifier = "mysql"

rds_engine = "mysql"

rds_engine_version = "8.0.15"

rds_instance_class = "db.t2.micro"

rds_allocated_storage = 10

rds_storage_encrypted = false # not supported for db.t2.micro instance

rds_name = "" # use empty string to start without a database created

rds_username = "admin" # rds_password is generated

rds_port = 3306

rds_maintenance_window = "Mon:00:00-Mon:03:00"

rds_backup_window = "10:46-11:16"

rds_backup_retention_period = 1

rds_publicly_accessible = false

rds_final_snapshot_identifier = "prod-trademerch-website-db-snapshot" # name of the final snapshot after deletion

rds_snapshot_identifier = null # used to recover from a snapshot

rds_performance_insights_enabled = true

A few notes on the configuration I used here:

1. Instance sizing and encryption: for production, make sure you use an instance size larger than a db.t2.micro so that you can use encryption on the storage layer.

2. Maintenance window: this day and time setting is used for patching of your instance.

3. Public access: make sure to set public access off for obvious reasons, but this should already be the case anyway if your instance is hosted in a private subnet.

4. Backups: two things regarding backups:

4.1) Providing the final snapshot identifier is useful when destroying the environment: it will automatically create a snapshot with the given name. If you do not supply this variable (and skip_final_snapshot is not set to true), you won't be able to remove the RDS instance with the terraform destroy command and you'll have to do this manually(!).

4.2) RDS supports automated backups; make sure to set the retention period (in days) correctly.

5. Query tracing: to enable in-depth tracing of your queries and performance statistics, set performance_insights_enabled to true. This is very useful in analyzing slow queries and, generally, query performance.

6. Database password: the password for the database is generated. This can be done with this resource:

resource "random_string" "password" {

length = 16

special = false

}
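As an alternative sketch, the random provider also offers random_password, which takes the same arguments but marks its result as sensitive so it is not printed in plan output. The resource name rds and the way it is wired into the module call are assumptions, since the article does not show that call.

```hcl
# Same idea as random_string, but the generated value is treated as sensitive.
resource "random_password" "rds" {
  length  = 16
  special = false
}

# In the module "rds" call (not shown in the article), you would then pass:
#   password = random_password.rds.result
```

Either way the generated password ends up in the Terraform state, so the state file itself needs to be stored somewhere access-controlled.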

RDS Security group

Finally, we need to supply the security group configuration for RDS so that the EC2 instance can communicate with our database.

resource "aws_security_group" "_" {

name = "${local.resource_name_prefix}-rds-sg"

description = "RDS (terraform-managed)"

vpc_id = var.rds_vpc_id

# Only MySQL in

ingress {

from_port = var.port

to_port = var.port

protocol = "tcp"

cidr_blocks = var.sg_ingress_cidr_block

}

# Allow all outbound traffic.

egress {

from_port = 0

to_port = 0

protocol = "-1"

cidr_blocks = var.sg_egress_cidr_block

}

}

The above allows ingress on the database port (var.port, 3306 for MySQL) from the CIDR blocks in var.sg_ingress_cidr_block, and allows all egress.
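A stricter variant, sketched here as an option rather than what the article does, is to allow ingress from the EC2 security group itself instead of from a CIDR range. Only instances that carry that security group can then reach the database. The variable ec2_security_group_id is hypothetical; you would fill it with aws_security_group.ec2.id from the root module.

```hcl
# Hypothetical variable: ID of the security group attached to the EC2 instance.
variable "ec2_security_group_id" {
  type = string
}

resource "aws_security_group" "_" {
  name        = "${local.resource_name_prefix}-rds-sg"
  description = "RDS (terraform-managed)"
  vpc_id      = var.rds_vpc_id

  # Only MySQL in, and only from members of the EC2 security group.
  ingress {
    from_port       = var.port
    to_port         = var.port
    protocol        = "tcp"
    security_groups = [var.ec2_security_group_id]
  }

  # Allow all outbound traffic.
  egress {
    from_port   = 0
    to_port     = 0
    protocol    = "-1"
    cidr_blocks = var.sg_egress_cidr_block
  }
}
```

The advantage over CIDR blocks is that the rule keeps working when subnets are re-addressed or more application instances are added to the same group.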

Conclusion

I hope this has been useful as a primer to understand how a simple AWS VPC is set up.

Questions? Ask me on Twitter.

See you in the next article, thanks for reading!